George Rekouts

Co-Founder & CEO

Outcome Learning Model: SaaS's New Moat

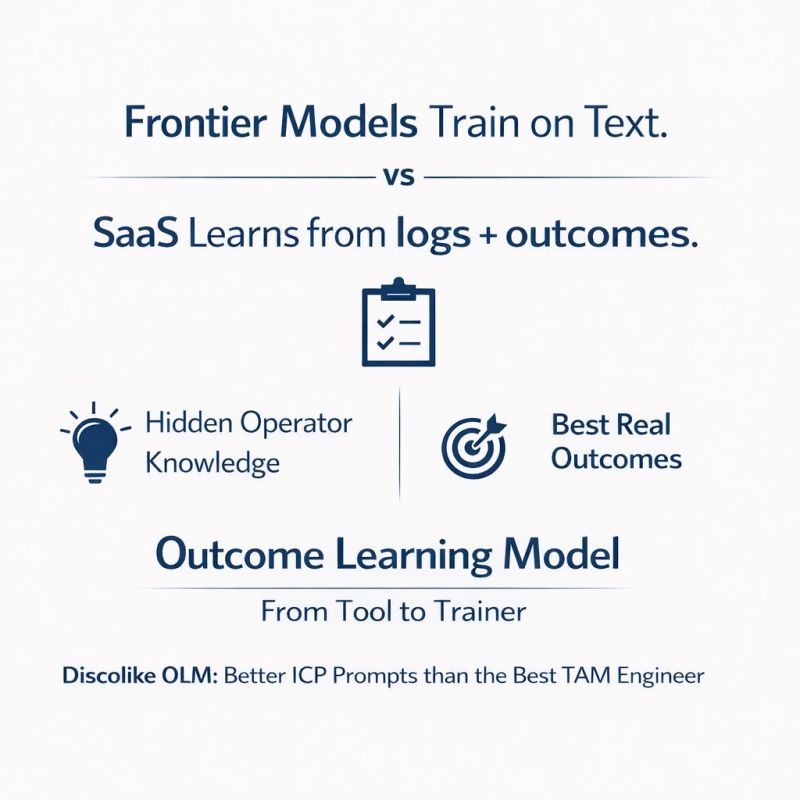

SaaS is not dying. What is dying is the idea that vibe-coded apps killed the SaaS moat. An Outcome Learning Model is SaaS’s new moat: a system that captures the strongest operator patterns, validated by real outcomes and never described, codified, or published anywhere, and turns them into better guidance, better prompts, better workflows, and eventually better decisions.

The Old SaaS Model Is No Longer Enough

The old SaaS model was simple. Put a UI in front of a workflow, ask users to fill fields, click buttons, run searches, move things through stages, and manually translate judgment into software. That model built huge companies. It is no longer enough.

Claude Code and similar tools made one thing obvious: if your product is mostly interface, logic, forms, and lightweight workflow, it can be replicated frighteningly fast. A weekend project can now produce what used to take a funded team months.

But most people are missing where the moat in SaaS is actually moving.

From Passive Software to Active Learning

The real shift in SaaS is going from passive to active. Passive software records what users do. Active software gets better by learning hidden patterns in users’ successful work.

Real operator knowledge is built through experience. A GTM engineer who has built hundreds of target account lists knows things about ideal customer profile (ICP) segmentation that no playbook captures. A sales ops leader who has tuned thousands of outbound sequences carries intuition that no onboarding doc can transfer. This knowledge is rarely written down, so frontier models cannot train on it and commoditize it.

But SaaS companies have the logs to learn from. They can map user behavior to successful outcomes and extract patterns across the full user population that no single user could see.

Google Was the First Outcome Learning Model

Google was one of the clearest early examples of this pattern. It started with backlinks, but over time it built large-scale feedback and evaluation loops on top of link-based relevance. Click-through rates, dwell time, pogo-sticking behavior, and query refinement patterns all fed back into ranking quality.

The moat became the learning loop, not just the original algorithm. A competitor could replicate PageRank. No competitor could replicate the behavioral signal from billions of searches.

Now this same pattern is becoming available to vertical SaaS companies. Products will learn from the link between user behavior and outcomes, and turn that into better decisions for every user.

How DiscoLike Built an Outcome Learning Model

The best new feature we added to DiscoLike is based on exactly this principle. We let models, especially Claude Code, analyze users’ solutions to specific total addressable market (TAM) and ICP requests, along with the resulting TAM mappings. A model can go through thousands of them and extract the best approaches in a way no single human can.

Then the next step gets really interesting. With that knowledge in hand, the system can generate a better ICP prompt than the best TAM engineer can. Not because the base LLM magically knows more, but because the product learned from the relationship between user behavior and real outcomes.

You give it one sentence, the rough ICP description that lives in every founder’s head, and it produces a fully configured dense search vector, ready to return precise matches. The AI TAM Query Assistant is the first feature built on this foundation.

What Vibe-Coded Apps Cannot Copy

A vibe-coded app can copy your UI, your flow, and a lot of your logic. What it cannot copy is the private outcome training base built from real customer behavior and successful outcomes. Without that, it stays generic, and generic software gets commoditized very fast.

This is the fundamental asymmetry. The knowledge locked inside an Outcome Learning Model comes from:

- Thousands of real user sessions where operators solved hard problems and exported results they were satisfied with

- Cross-user pattern extraction that reveals best practices visible only across the full population, not to any individual user

- Outcome validation where the signal is not clicks or time-on-page, but actual business results: exported lists, closed deals, successful campaigns

- Continuous compounding where every new successful outcome makes the model better for the next user
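The four ingredients above can be illustrated with a small sketch. This is not DiscoLike's implementation; it is a minimal example that assumes each session can be reduced to a user, a labeled approach, and a success flag. The `Session` fields, the pattern labels, and the `min_users` threshold are all hypothetical names chosen for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    user_id: str
    pattern: str     # hypothetical label for the approach the operator used
    succeeded: bool  # hypothetical outcome flag, e.g. the list was exported


def rank_patterns(sessions, min_users=2):
    """Rank patterns by success rate, keeping only patterns that
    at least `min_users` distinct users have tried."""
    stats = defaultdict(lambda: {"users": set(), "total": 0, "wins": 0})
    for s in sessions:
        entry = stats[s.pattern]
        entry["users"].add(s.user_id)
        entry["total"] += 1
        entry["wins"] += int(s.succeeded)

    ranked = [
        (pattern, e["wins"] / e["total"], e["total"])
        for pattern, e in stats.items()
        if len(e["users"]) >= min_users  # cross-user filter
    ]
    # Highest success rate first; ties broken by number of sessions.
    return sorted(ranked, key=lambda r: (r[1], r[2]), reverse=True)


# Made-up sessions, purely for illustration.
sessions = [
    Session("u1", "narrow_by_tech_stack", True),
    Session("u2", "narrow_by_tech_stack", True),
    Session("u3", "narrow_by_tech_stack", False),
    Session("u1", "broad_keyword_search", False),
    Session("u2", "broad_keyword_search", True),
    Session("u3", "one_off_trick", True),
]

for pattern, rate, n in rank_patterns(sessions):
    print(f"{pattern}: {rate:.2f} success over {n} sessions")
```

Re-running the ranking as new sessions arrive is the compounding step: every additional validated outcome shifts the success rates that guide the next user. A pattern seen from only one user is dropped here, which is one simple way to separate shared best practice from individual habit.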

A weekend coding project starts with zero behavioral data and zero outcome signal. That gap does not close with better prompts or faster shipping. It closes with customers and time.

Outcome Learning Model Turns SaaS from a Tool into a Trainer

The Outcome Learning Model turns SaaS from a tool into a trainer. Instead of just giving users a blank canvas and hoping they figure it out, the software actively guides them toward better results based on what worked for users like them.

This is the next evolution of SaaS defensibility. Not just data network effects, where more users create more data. Outcome Learning Models create intelligence network effects, where more successful outcomes create better guidance for every future user.

The companies that build this loop first in their vertical will be extremely difficult to displace, not because their code is better, but because their system has learned things that cannot be replicated without the same history of real customer outcomes.

Frequently Asked Questions

What is an Outcome Learning Model?

An Outcome Learning Model is a system that captures operator patterns validated by real outcomes, patterns that were never described, codified, or published anywhere, and turns them into better guidance, prompts, workflows, and decisions for future users. It learns from the relationship between user behavior and successful results.

How is an Outcome Learning Model different from a data moat?

A traditional data moat protects a company through volume of raw data. An Outcome Learning Model goes further: it maps user behavior to verified successful outcomes and extracts the implicit expertise embedded in those patterns. The moat is not the data itself but the intelligence derived from outcome-validated behavior.

Can AI coding tools like Claude Code replicate an Outcome Learning Model?

AI coding tools can replicate interfaces, workflows, and logic. They cannot replicate the private behavioral corpus built from thousands of real users achieving real outcomes over time. The Outcome Learning Model depends on proprietary customer data that does not exist in any public training set.

Why does this matter for vertical SaaS companies?

Vertical SaaS companies sit on the richest behavioral data in their domain. Every successful search, every exported list, every closed deal creates signal. Companies that build learning loops on top of this data create a compounding advantage that grows with every customer interaction.
